IntellaNOVA Newsletter #37: Revolutionizing AI and Data: From DeepSeek’s Affordable AI to Apache Spark’s Serverless Innovation and Meta’s Privacy Dilemma

DeepSeek: Why the Chinese AI Rival is Giving ChatGPT a Run for Its Money

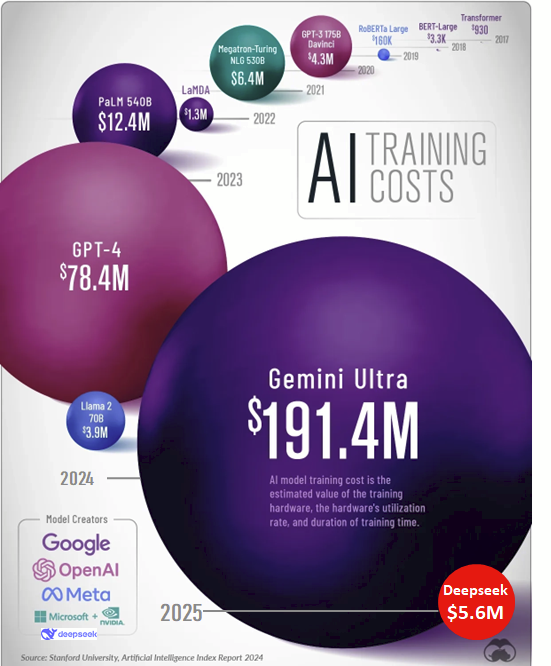

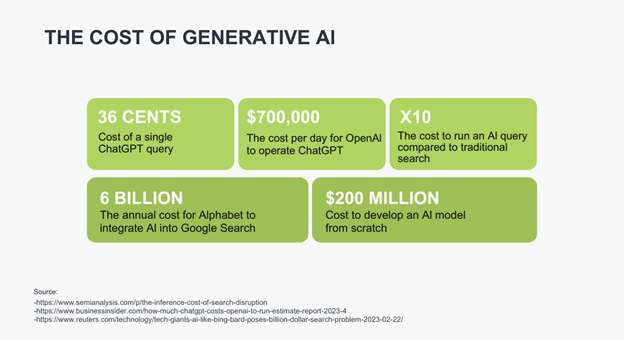

Chinese researchers have shaken the AI world with DeepSeek-R1, a reasoning model that rivals OpenAI’s ChatGPT, reportedly reaching GPT-4-level performance at a fraction of the cost and development time. Developed in just two months for a reported $5.6 million (versus OpenAI’s $5 billion annual budget), DeepSeek’s rapid progress has drawn serious attention; even Microsoft’s Satya Nadella has warned about China’s AI advancements.

The model’s architecture combines DualPipe optimization for maximizing GPU efficiency, Multi-head Latent Attention for compressed yet effective multi-head attention, and Mixture of Experts for more efficient feed-forward layers. Together these contribute to its strong reasoning, coding, and mathematical capabilities: it has outperformed Meta’s Llama 3.1 and Alibaba’s Qwen2.5. DeepSeek-R1 is also cheaper to run, with inference costs as low as $0.14 per million tokens, undercutting major competitors.

Despite U.S. export restrictions on AI hardware, DeepSeek optimized its algorithms to achieve these results using just 2,000 GPUs, far fewer than OpenAI’s 10,000, and it has reportedly stockpiled Nvidia A100 chips. This success signals a shift in global AI development, challenging Silicon Valley’s dominance and showing that innovation doesn’t always require billion-dollar investments. Former Google CEO Eric Schmidt now acknowledges China’s rapid AI progress, and DeepSeek raises critical questions about the U.S.’s competitive edge, the role of open-source models in disrupting closed-source giants, and the future of global AI leadership. By democratizing AI development through smarter algorithms and resourceful strategies, DeepSeek is redefining the race for AI supremacy.
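To make the Mixture of Experts idea concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is not DeepSeek’s implementation: the function names, the toy "scaling" experts, and the choice of k=2 are all assumptions made for illustration. It only shows why such a layer can be cheap, since each token is processed by a few selected experts rather than by all of them.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(gate_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in chosen)
    return {i: probs[i] / mass for i in chosen}


def moe_forward(x, experts, gate_weights, k=2):
    """Run one token vector through a sparse MoE layer.

    x            : list of floats (the token's hidden vector)
    experts      : list of callables, each mapping a vector to a vector
    gate_weights : one weight vector per expert; gate score = dot(w, x)
    """
    gate_scores = [sum(w_j * x_j for w_j, x_j in zip(w, x)) for w in gate_weights]
    routing = route_top_k(gate_scores, k)
    out = [0.0] * len(x)
    for idx, weight in routing.items():
        y = experts[idx](x)  # only the selected experts are evaluated
        out = [o + weight * y_j for o, y_j in zip(out, y)]
    return out, routing


def make_scaling_expert(scale):
    """Hypothetical stand-in for a feed-forward expert: multiplies the input by a constant."""
    return lambda v: [scale * v_j for v_j in v]


if __name__ == "__main__":
    random.seed(0)
    dim, n_experts = 4, 8
    experts = [make_scaling_expert(s) for s in range(1, n_experts + 1)]
    gate_weights = [[random.uniform(-1, 1) for _ in range(dim)] for _ in range(n_experts)]
    token = [0.5, -1.0, 0.25, 2.0]
    output, routing = moe_forward(token, experts, gate_weights, k=2)
    print("experts used:", sorted(routing))
    print("output:", output)
```

With eight experts and k=2, only a quarter of the expert computations run for each token, which is the general source of the feed-forward savings described above.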

Why DeepSeek’s AI is the Beginning of Affordable and Accessible AI

Artificial Intelligence (AI) is reshaping work, communication, and daily life, but its widespread adoption has been hindered by high costs, until now. DeepSeek, a Chinese AI lab, claims to have developed an algorithm that reduces the computational requirements for training large language models (LLMs) by up to 95%, cutting training costs from OpenAI’s estimated $80 million to just $5.6 million. The implications are significant: token processing costs fall to $0.10 from GPT-4’s $4.40, and the model is released as open source under the MIT license, making AI more accessible. While this efficiency threatens short-term demand for high-powered GPUs from companies like NVIDIA, the long-term expansion of AI applications remains promising.

Consumers stand to benefit as affordable AI fuels innovation across industries like healthcare, education, and finance, while AI giants such as OpenAI, Meta, and Google face disruption from open-source alternatives. For AI tool builders, lower operating costs will enable greater adoption and innovation, but security concerns around foreign AI platforms must be addressed; technologies like Privacera AI Governance (PAIG) offer ways to mitigate privacy and compliance risks. DeepSeek’s innovation marks not just a technological leap but a societal milestone, democratizing AI and challenging the dominance of industry giants. By making AI more affordable and scalable, DeepSeek is paving the way for a more inclusive future where cutting-edge AI is no longer limited to those with deep pockets.
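As a quick sanity check on the figures quoted above, the short sketch below works out the implied percentage reductions. The dollar amounts are taken from the text as reported claims, not independently verified numbers; the helper name `percent_reduction` is just an illustrative choice.

```python
def percent_reduction(before, after):
    """Return the percentage drop from `before` to `after`."""
    return (before - after) / before * 100


# Figures as quoted in the text above (claims, not independently verified).
training_before_usd = 80_000_000  # OpenAI's estimated training cost
training_after_usd = 5_600_000    # DeepSeek's reported training cost
token_before_usd = 4.40           # GPT-4 token price quoted in the text
token_after_usd = 0.10            # DeepSeek token price quoted in the text

training_cut = percent_reduction(training_before_usd, training_after_usd)
token_cut = percent_reduction(token_before_usd, token_after_usd)

print(f"Training cost reduction: {training_cut:.1f}%")
print(f"Token price reduction:   {token_cut:.1f}%")
print(f"Token price ratio:       {token_before_usd / token_after_usd:.0f}x")
```

On these numbers the training cost falls by about 93%, in line with the "up to 95%" headline, and the quoted token price falls by about 97.7%, a 44-fold difference.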

Unleashing the Power of Data: How Apache Spark on EMR Serverless Transforms Big Data Workflows

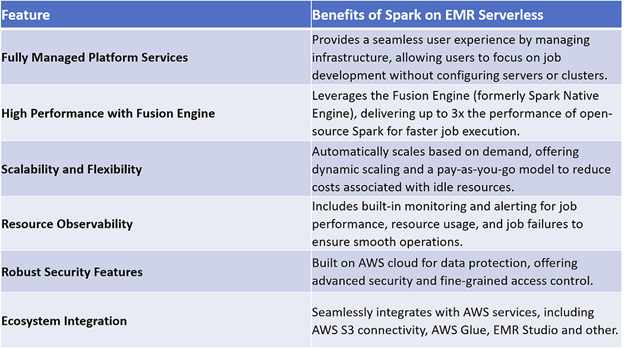

Organizations are increasingly adopting cloud-native, serverless solutions to optimize data workflows. One such powerful tool is Apache Spark on EMR Serverless — a fully managed, high-performance data processing service by AWS. EMR Serverless is designed for enterprises that want to focus on data analysis rather than infrastructure complexities. It offers seamless scalability, real-time monitoring, and advanced security integrations. With its Fusion Engine, it delivers up to 3x the performance of open-source Spark, ensuring faster job execution and reduced latency. The platform’s dynamic scaling allows businesses to efficiently handle varying workloads while only paying for the resources used, minimizing costs. Additionally, EMR Serverless integrates effortlessly with AWS services like S3, Glue, and EMR Studio, simplifying machine learning workflows and enterprise data management. With built-in security features, including Privacera for fine-grained access control, organizations can safeguard sensitive data while driving efficiency. By leveraging Spark on EMR Serverless, enterprises can streamline operations, optimize costs, and unlock the full potential of their data infrastructure.
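For readers who want to see what running Spark on EMR Serverless can look like in practice, here is a minimal sketch that submits a PySpark script through the boto3 `emr-serverless` client and its `start_job_run` call. The application ID, role ARN, bucket paths, region, and Spark settings are hypothetical placeholders, and the helper names are illustrative; an existing EMR Serverless application and valid AWS credentials are assumed.

```python
def build_job_run_request(application_id, role_arn, script_uri, args=(), spark_params=""):
    """Assemble keyword arguments for the EMR Serverless StartJobRun call."""
    spark_submit = {"entryPoint": script_uri, "entryPointArguments": list(args)}
    if spark_params:
        spark_submit["sparkSubmitParameters"] = spark_params
    return {
        "applicationId": application_id,
        "executionRoleArn": role_arn,
        "jobDriver": {"sparkSubmit": spark_submit},
    }


def submit_job(request, region="us-east-1"):
    """Send the request to AWS. Needs boto3 installed and valid AWS credentials."""
    import boto3  # imported lazily so the builder above works without boto3

    client = boto3.client("emr-serverless", region_name=region)
    response = client.start_job_run(**request)
    return response["jobRunId"]


if __name__ == "__main__":
    # All identifiers below are hypothetical placeholders.
    request = build_job_run_request(
        application_id="00example-app-id",
        role_arn="arn:aws:iam::123456789012:role/ExampleEmrServerlessRole",
        script_uri="s3://example-bucket/scripts/wordcount.py",
        args=["s3://example-bucket/output/"],
        spark_params="--conf spark.executor.memory=4g --conf spark.executor.cores=2",
    )
    print(request)
    # submit_job(request)  # uncomment once real IDs and credentials are in place
```

Note that the request names no cluster or instance count: the service provisions and scales workers for the job, which is the pay-for-what-you-use model described above.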

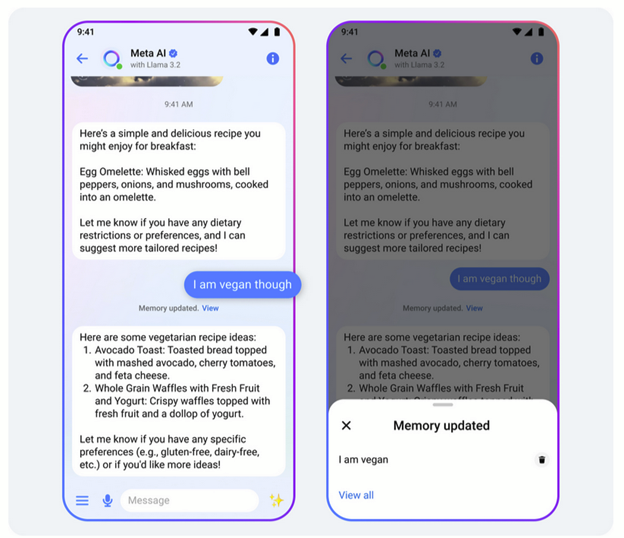

Meta AI’s New Memory Feature: Innovation or Another Data Privacy Nightmare?

Meta’s latest update to Meta AI introduces a memory feature that enhances personalization by recalling user preferences and pulling data from Facebook, Messenger, WhatsApp, and Instagram to offer tailored recommendations. While this advancement aligns with similar features in OpenAI’s ChatGPT and Google’s Gemini, Meta takes it further by leveraging cross-platform data to refine responses. CEO Mark Zuckerberg promotes this as a convenience, but given Meta’s history of privacy concerns, skepticism is inevitable. The biggest issue? There’s no opt-out. While users can delete memories, there’s no clear way to prevent Meta AI from continuously collecting data across its ecosystem. With no transparency on how long this information is stored or how it’s used beyond chat interactions, users may feel more uneasy than excited. This update underscores the ongoing tension between personalization and privacy. If Meta fails to establish trust and provide clear data controls, this innovation may spark another privacy backlash rather than a user experience breakthrough.